Do you know the most popular types of data migration tools and the differences between them? Let’s take a look! Although there are many different kinds of data migration tools, some of the most popular are:

- Tools for data replication,

- Data migration,

- Extract, transform, and load (ETL).

ETL tools take data from one system, transform it into a format that another system can use, and then load it into that system. Data replication tools copy data from one system to another, often in real time. Data migration tools take a more comprehensive approach to data mobility: they combine extraction, transformation, and loading with supplementary features such as data quality control and data governance.
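To make the extract, transform, and load steps concrete, here is a minimal sketch in Python that uses two in-memory SQLite databases. The table names (`legacy_customers`, `customers`), the columns, and the sample rows are assumptions made up for illustration; a real migration would connect to your actual source and target systems.

```python
import sqlite3


def extract(conn):
    """Extract: read every row from the (hypothetical) legacy table."""
    return conn.execute(
        "SELECT id, full_name, signup_date FROM legacy_customers"
    ).fetchall()


def transform(rows):
    """Transform: split the name and normalize the date separator."""
    result = []
    for customer_id, full_name, signup_date in rows:
        first, _, last = full_name.strip().partition(" ")
        result.append((customer_id, first, last, signup_date.replace("/", "-")))
    return result


def load(conn, rows):
    """Load: insert the transformed rows into the target table."""
    conn.executemany(
        "INSERT INTO customers (id, first_name, last_name, signup_date) "
        "VALUES (?, ?, ?, ?)",
        rows,
    )
    conn.commit()


def run_etl():
    """Build throwaway source and target databases and run the three steps."""
    source = sqlite3.connect(":memory:")
    target = sqlite3.connect(":memory:")
    source.execute(
        "CREATE TABLE legacy_customers (id INTEGER, full_name TEXT, signup_date TEXT)"
    )
    source.executemany(
        "INSERT INTO legacy_customers VALUES (?, ?, ?)",
        [(1, "Ada Lovelace", "2021/03/01"), (2, " Alan Turing ", "2022/11/15")],
    )
    target.execute(
        "CREATE TABLE customers ("
        "id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT, signup_date TEXT)"
    )
    load(target, transform(extract(source)))
    return target.execute("SELECT * FROM customers ORDER BY id").fetchall()


if __name__ == "__main__":
    for row in run_etl():
        print(row)
```

Keeping the three steps in separate functions means each one can be tested or swapped out on its own, which is the same separation that dedicated ETL tools provide at a larger scale.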

The most effective data migration applications offer a straightforward way to move your data from one PC to another. If you’ve never migrated data, the entire procedure may seem daunting, whether you are doing it for personal or professional purposes. Either way, you should use the best data transfer solution for the job, whether you’re moving data for security, for backup, or to upgrade to the newest operating system. Different software packages handle data migration in different ways, from shrinking the contents of a hard disk to fit on a faster SSD to cloning and migrating an entire operating system, which eliminates the need to reinstall apps. Comparing the features that each solution offers is the quickest way to determine which will best meet your objectives. In this post, we provide information about data migration tools. Let’s start!

A Short Overview of Data Migration

Data migration, as its name implies, is the process of moving data between systems. What changes may be the environment, the format, or the application, so a migration can involve new file formats or new storage types. This one-time transfer can include preparing, extracting, and transforming the data. Data is moved from the old system to the new one according to a predetermined mapping. Ideally, a migration completes without any data loss and with as little manual re-creation or alteration of data as possible. An organization may migrate data for the following reasons:

- Setting up a new data warehouse

- Restructuring a complete system

- Database modernization

- Merging new data from an acquisition

- Installing additional systems

What are Data Migration Tools?

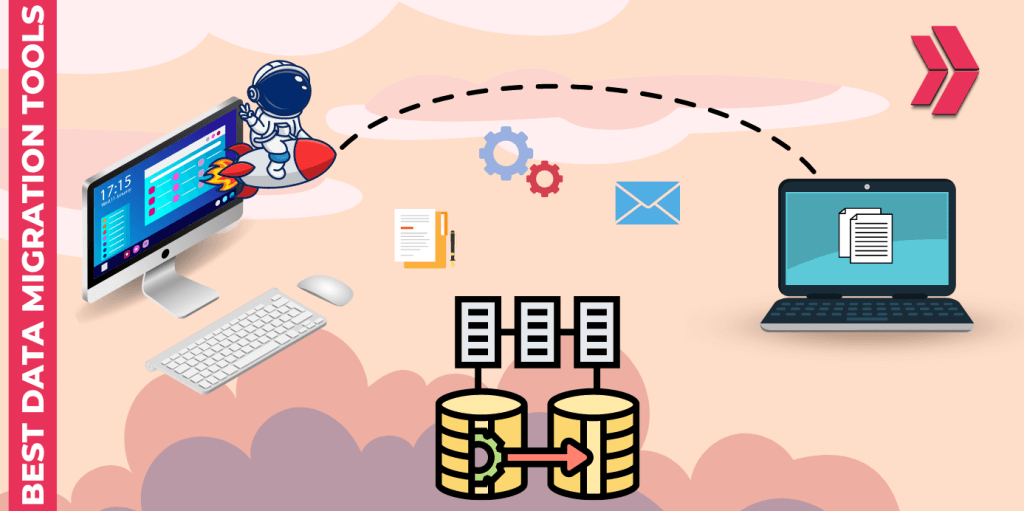

Three different categories of data migration tools exist. These are Self-Scripted, On-Premises, and Cloud-Based data migration tools.

Open Source (Self-Scripted) Tools:

Self-scripted data migration is an in-house solution that can work for small jobs and quick fixes but does not scale to bigger ones. Self-scripted tools are also useful when a particular source or destination is not supported by other tools. They can be written very quickly, but they demand in-depth coding expertise.

On-Premise Tools:

On-premises data migration tools transfer data between local servers or databases rather than through the cloud. They are used to clean, monitor, and transform data before it is loaded into the target system, and they help connect servers with other systems. On-premises data migration software is the ideal option if all of your data is already in one place, and it suits static data requirements where no growth is planned.

It is also the best solution when compliance regulations prohibit multi-tenant or cloud-based data migration tools. On-premises tools provide low latency and control over the full stack, from the application down to the physical layers. The trade-off is that you have to maintain these tools yourself.

Cloud-Based Tools:

These are used to move legacy data to systems that are better equipped for quick analysis, such as large data lakes or cloud data warehouses. Cloud-based data migration tools are helpful for businesses moving data from a variety of technologies and sources to a cloud-based destination.

Why is Data Migration Needed?

Data migration may be necessary any time we need to move data between systems. Frequently cited reasons include:

- Migration of applications

- Upgrade or maintenance tasks

- Replacement of storage or server equipment

- Relocation or data center move

- Website consolidation

How is Data Migration Done?

Data migration is a time-consuming job that would take a lot of labor to do manually. As a result, it has been automated with tools created specifically for the task. Programmatic data migration consists of three steps: extracting the data from the old system, loading it into the new system, and verifying the result.
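The verification step is the one most often skipped in home-grown scripts, so here is a hedged sketch of one simple way to do it: compare a row count and a content hash of the same table in the source and the target. The helper names (`table_fingerprint`, `verify_migration`, `make_demo_db`) and the `orders` table are hypothetical, and SQLite stands in for whatever databases you actually use.

```python
import hashlib
import sqlite3


def table_fingerprint(conn, table):
    """Return (row_count, sha256 hex digest) for a table.

    Rows are ordered by the first column so that physical row order does not
    affect the result. The table name is interpolated into the SQL, so it must
    come from trusted code, never from user input.
    """
    rows = conn.execute(f"SELECT * FROM {table} ORDER BY 1").fetchall()
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def verify_migration(source, target, table):
    """True when both databases hold the same rows in the given table."""
    return table_fingerprint(source, table) == table_fingerprint(target, table)


def make_demo_db(rows):
    """Create an in-memory database with a small demo table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    return conn


if __name__ == "__main__":
    old = make_demo_db([(1, 9.5), (2, 20.0)])
    new = make_demo_db([(2, 20.0), (1, 9.5)])
    print("tables match:", verify_migration(old, new, "orders"))
```

Hashing whole tables in memory is only practical for small data sets. For large tables, the same idea is usually applied per chunk or per key range.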

Open Source Data Migration Tools

Azure DocumentDB

Microsoft’s Azure DocumentDB Data Migration Tool is an open-source tool that customers can use to migrate their documents to DocumentDB, a NoSQL document database. DocumentDB is a fully managed, serverless database with built-in scalability.

Key Features:

Here are a few of Azure DocumentDB’s key characteristics:

- It supports a wide range of data sources, such as SQL, Azure DocumentDB, MongoDB, Azure Table Storage, HBase, and Amazon DynamoDB, as well as CSV and JSON files.

- Numerous operating systems and .NET frameworks are supported.

- It offers real-time streaming data services.

Azure Cosmos DB

The Azure Cosmos DB Data Migration Tool is a free, open-source command-line tool for migrating data to Azure Cosmos DB from a variety of sources, including SQL Server, Table Storage, MongoDB, Amazon DynamoDB, CSV files, and JSON files. It works well for modest migration plans.

Key Features:

The functionalities offered by the Azure Cosmos DB Data Migration Tool include:

- Support for data migration from a range of sources, including CSV files, JSON files, Table Storage, MongoDB, SQL Server, and Amazon DynamoDB.

- The capacity to map data from a source to the relevant Cosmos DB SQL API collection.

- The capacity to filter information while moving.

- The capacity to keep track of the migration’s development.

- Having the option to stop the migration at any point.

Flyway

Flyway is an open-source database migration tool that users can drive through its command-line client or its API. It is built around a small set of basic commands: migrate, clean, validate, undo, baseline, and repair.

Key Features:

- Users have the option of writing migrations in Java or SQL.

- Numerous databases, like MySQL, SQL Server, Oracle, and DB2, are supported.

- Plugins are available for platforms such as Play, Grails, and Spring Boot.
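To illustrate the idea behind versioned migrations, here is a small Python sketch. It is not Flyway’s implementation. It assumes migration scripts named in the `V<version>__<description>.sql` style that Flyway uses, and it records applied versions in a hypothetical `schema_history` table so that each script runs only once. The scripts are held in a dictionary and applied to an in-memory SQLite database to keep the example self-contained.

```python
import re
import sqlite3

# Assumed naming pattern: V<version>__<description>.sql, e.g. V1__Create_person.sql
MIGRATION_NAME = re.compile(r"^V(\d+(?:[._]\d+)*)__(.+)\.sql$")


def parse_version(filename):
    """Turn 'V1_2__Add_index.sql' into the sortable tuple (1, 2)."""
    match = MIGRATION_NAME.match(filename)
    if match is None:
        raise ValueError(f"not a versioned migration: {filename}")
    return tuple(int(part) for part in re.split(r"[._]", match.group(1)))


def migrate(conn, migrations):
    """Apply pending migrations in version order and return their names.

    `migrations` maps a file name to its SQL text. `schema_history` is a
    hypothetical bookkeeping table used only by this sketch.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_history "
        "(version TEXT PRIMARY KEY, script TEXT)"
    )
    applied = {row[0] for row in conn.execute("SELECT version FROM schema_history")}
    newly_applied = []
    for name in sorted(migrations, key=parse_version):
        version = ".".join(str(part) for part in parse_version(name))
        if version in applied:
            continue  # already applied in an earlier run
        conn.executescript(migrations[name])
        conn.execute("INSERT INTO schema_history VALUES (?, ?)", (version, name))
        conn.commit()
        newly_applied.append(name)
    return newly_applied


DEMO_MIGRATIONS = {
    "V2__Add_email.sql": "ALTER TABLE person ADD COLUMN email TEXT;",
    "V1__Create_person.sql": "CREATE TABLE person (id INTEGER PRIMARY KEY, name TEXT);",
}

if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    print(migrate(connection, DEMO_MIGRATIONS))  # first run applies both scripts
    print(migrate(connection, DEMO_MIGRATIONS))  # second run finds nothing pending
```

Because the history lives in the database itself, running the same command again is safe, which is what makes this style of tool easy to wire into a deployment pipeline.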

TiDB

TiDB is a distributed, open-source HTAP (hybrid transactional and analytical processing) database. TiDB Data Migration (DM) is an open-source tool that helps transfer data from MySQL/MariaDB to TiDB.

Key Features:

- It is built to run on cloud platforms for flexible deployment and operation.

- It supports both OLTP and OLAP workloads.

- It uses the Raft consensus algorithm to keep data available.

Ladder

Ladder is another free database migration tool. It is written in PHP 5 and supports the MySQL database server. Because its migrations can be kept alongside the source code, it can be used to track database changes together with code changes.

Key Features:

The following are a few of Ladder’s most important features:

- Users can change, add, or remove columns.

- Metadata is kept and used during the rollback process.

- Users can add or remove indexes and constraints.

On-Premise Tools

On-premises solutions transfer data between two or more servers or databases within a medium-sized or large enterprise network without moving it to the cloud. These solutions are ideal if you’re moving data warehouses, changing the location of your main data storage, or simply combining data from several on-premises sources. Some businesses choose on-premises solutions because of security constraints.

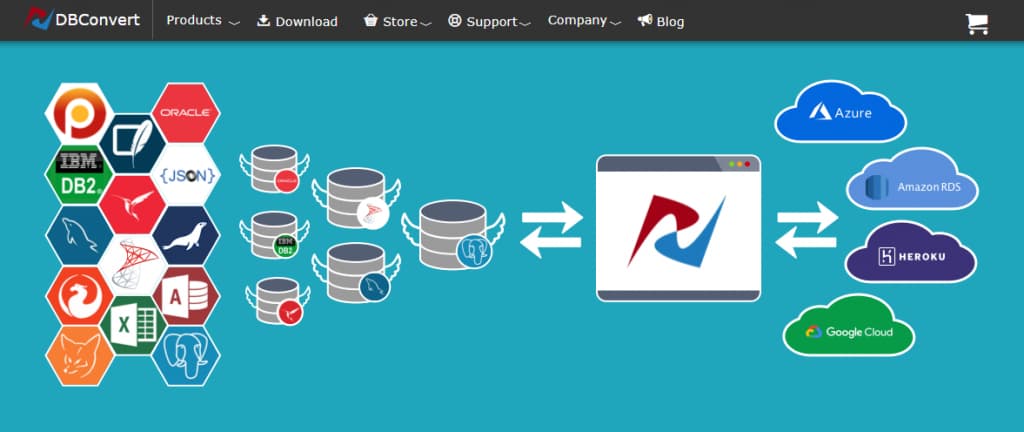

DBConvert Studio

DBConvert Studio by SLOTIX s.r.o. is one of the best tools for database migration and synchronization. It supports the ten most popular on-premises databases, including SQL Server, MySQL, PostgreSQL, and Oracle. For exceptionally large data volumes, it may make sense to move databases to one of the following cloud platforms: Heroku Postgres, Google Cloud SQL, Microsoft Azure SQL, and Amazon RDS/Aurora.

Key Features:

- Three data migration scenarios are supported: source-to-target migration, one-way synchronization, and bidirectional synchronization.

- Any database object can be renamed during migration.

- Data types can be mapped for all target tables at once or for individual tables.

- The required information may be retrieved from the source database using filters.

- It is possible to transfer the source table to an existing target table.

- Without the GUI running, tasks may be launched at a given time using the flexible built-in Scheduler.

Microsoft SQL Server

Microsoft SQL Server brings SQL Server functionality to Windows, Linux, and Docker containers, so developers can build intelligent applications with their preferred languages and environments. Its data integration features are available both on-premises and in the cloud (via Integration Platform as a Service). The SQL Server DBMS platform comes with the company’s standard integration tool, SQL Server Integration Services (SSIS). Microsoft also promotes two cloud integration services, Microsoft Flow and Azure Logic Apps. Flow, which targets ad hoc integrators, is part of the broader Azure Logic Apps offering.

Key Features:

- It offers performance that is among the best in the market.

- It offers advanced security features and services.

- Built-in AI features help you transform your business.

- With mobile BI, it provides insights wherever users are.

Software AG

Software AG is a market leader in integration and API management software that gives businesses a broad range of capabilities for quickly delivering digital transformation initiatives. It offers a variety of capabilities for integrating and managing data on mainframe, mid-tier, desktop, or cloud systems, and it is a long-standing veteran of the data integration industry.

Software AG provides a variety of database drivers that give real-time access to data and a single view across many databases. The system also offers data virtualization and streamlined SQL access to over 150 data sources.

Key Features:

- You can manage APIs and B2B transactions as well as seamlessly combine your on-premises and SaaS apps with this solution.

- You can fully manage your APIs, protect them from attacks, meet your SLAs, and monetize your data.

- It makes it simple to send business documents electronically to clients and partners.

- It lets users quickly connect apps to the cloud.

- Using this gateway, partners may integrate with industry standards like X12 and EDIFACT, among others.

Cloud-Based Data Migration Tools

Integrate.io

Integrate.io is a cloud-based data integration platform and an all-inclusive toolbox for creating data pipelines. It offers solutions for customer support, marketing, sales, and developers, and these solutions serve the retail, hospitality, and advertising sectors. With Integrate.io, you can connect to more than 140 platforms, data stores, and cloud-based data sources. Its drag-and-drop, no-code interface gives users access to a wide variety of SaaS apps and services. It handles future changes automatically with no maintenance, and it is scalable and elastic.

Key Features:

- Integrate.io offers tools for simple migrations, which make it easier to move to the cloud.

- It provides the functionality to connect to legacy systems.

- It helps you connect quickly to on-premises legacy systems and move data out of them.

- It works with SQL, Oracle, Teradata, DB2, and SFTP servers.

AWS Data Migration

AWS Data Migration, offered by Amazon as AWS Database Migration Service (AWS DMS), is one of the most effective tools for cloud data migration. It facilitates a safe and simple database migration to AWS.

Key Features:

- The AWS data migration tool supports both homogeneous migrations, such as Oracle to Oracle, and heterogeneous migrations, such as Oracle to Microsoft SQL Server.

- It keeps application downtime to a minimum.

- It allows the source database to continue operating normally throughout the migration procedure.

- It is a very adaptable tool that can move data across the most popular open-source and commercial databases.

- Its high availability makes it suitable for continual data moves.

Matillion

Matillion is a cloud-based ETL tool that supports your data journey by extracting, migrating, and transforming your data in the cloud. This helps you discover new insights and make better decisions.

Key Features:

Matillion has the following salient characteristics:

- Matillion’s Transformation Components offer post-load transformations. Any user can build a transformation component either by writing SQL queries or by pointing and clicking, and can then drag the component to the desired position in the data pipeline in Matillion’s visual workspace.

- Matillion provides support through a ticketing system that can be reached by email or through its support portal. Its documentation consists of the articles on the support portal, and tutorial videos are available on Matillion’s YouTube channel. The company does not, however, provide training services.

- Matillion integrates with around 60 data sources across several major categories: ERP, finance, social networks, databases, online resources, CRM, marketing communications, files, and document formats. The company will build additional data sources at a customer’s request, but third parties cannot create new data source integrations or improve existing ones. Matillion supports Google BigQuery, Amazon Redshift, and Snowflake as destinations.

Stitch Data

Stitch Data is a cloud-based ETL tool that lets you quickly move data from one location to another without writing any code. It offers features for data warehousing, ETL (extract, transform, and load), data visualization, and data security. It integrates with numerous databases and SaaS sources, including MySQL, PostgreSQL, MariaDB, Oracle Database, and Asana. This lets you concentrate on deriving useful insights from your data to guide business growth.

Key Features:

Here are a handful of Stitch Data’s salient attributes:

- Stitch Data follows an ELT approach: it performs only the transformations needed to make the data compatible with the destination. For further data transformation, it also provides solutions based on third-party processing engines such as MapReduce and Apache Spark.

- Stitch Data provides in-app chat support to all of its customers, while phone support is reserved for enterprise customers. The Stitch Data website offers extensive, open-source documentation.

- Stitch Data offers a number of SaaS and database integrations as data sources. Customers can add sources by building them according to the Singer specification, an open-source standard for writing scripts that move data. Singer integrations that run on Stitch Data’s platform can use its scheduling, monitoring, and auto-scaling capabilities.

- It may be applied to data transformation and analytical preparation.

- It might be added to a data storage or business intelligence tool for additional analysis.
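For readers curious about what building a source "according to Singer" involves, here is a hedged sketch. In the Singer specification, a data source (a "tap") writes JSON messages to standard output, one per line, using the message types `SCHEMA`, `RECORD`, and `STATE`. The `users` stream, its fields, and the bookmark value below are invented for illustration, and the sketch uses only the Python standard library rather than any Singer helper package.

```python
import json
import sys


def singer_messages(stream, key_properties, schema, records, bookmark):
    """Yield Singer-style messages for one stream.

    The stream name, schema, records, and bookmark are supplied by the caller;
    in this sketch they are hypothetical sample values.
    """
    # SCHEMA describes the shape of the records that follow.
    yield {
        "type": "SCHEMA",
        "stream": stream,
        "schema": schema,
        "key_properties": key_properties,
    }
    # One RECORD message per row of data.
    for record in records:
        yield {"type": "RECORD", "stream": stream, "record": record}
    # STATE lets the next run resume where this one stopped.
    yield {"type": "STATE", "value": bookmark}


def emit(messages, out=sys.stdout):
    """Write each message as one line of JSON."""
    for message in messages:
        out.write(json.dumps(message) + "\n")


if __name__ == "__main__":
    emit(
        singer_messages(
            stream="users",
            key_properties=["id"],
            schema={
                "type": "object",
                "properties": {"id": {"type": "integer"}, "name": {"type": "string"}},
            },
            records=[{"id": 1, "name": "Ada"}, {"id": 2, "name": "Alan"}],
            bookmark={"users": {"last_id": 2}},
        )
    )
```

A separate program (a "target") reads these lines from standard input and loads them into the destination, so sources and destinations can be mixed freely.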

AWS Data Pipeline

AWS (Amazon Web Services) offers a variety of cloud-based data management services. AWS Data Pipeline focuses on data transfer. AWS Glue, another key AWS service, focuses on ETL, moving data from sources to analytical destinations.

Key Features:

The AWS Data Pipeline has the following salient characteristics:

- AWS Data Pipeline supports SQL commands for preload transformations. You can design a pipeline visually, or define it from a terminal with the AWS Command Line Interface (CLI), in which case the pipeline definition must be in JSON format. A pipeline can also be built programmatically via API calls.

- AWS offers online support through a ticketing system and a knowledge base. Support tickets may be answered by chat or phone. AWS Data Pipeline has extensive documentation, and AWS also provides online training resources for it.

- AWS Data Pipeline supports four types of data nodes as sources and destinations: SQL, DynamoDB, Redshift tables, and S3 locations. It does not yet support SaaS data sources.

SnapLogic

SnapLogic is a cloud-based data transfer application that lets users connect data from many sources. Its drag-and-drop interface makes creating data pipelines simple, and its machine learning capabilities help users map data fields across multiple data sources.

Key Features:

- Simple pipeline building with a drag-and-drop interface.

- Prebuilt connectors for well-known data sources and applications.

- Capabilities for transforming and enriching data.

- Real-time monitoring and notifications.

- A vast repository of pipelines built by the community.

Hevo Data

Hevo Data is a cloud-based data transfer application that helps businesses collect, prepare, and analyze data. It offers a comprehensive solution for data warehousing, data lakes, and data science. Its serverless architecture is built on top of the cloud-native Apache Hadoop and Spark ecosystems. The platform is fully managed and has a pay-as-you-go pricing structure.

Key Features:

Hevo Data’s main features include:

- Collecting data from a broad range of sources

- Data transformation and cleansing

- Loading data into a data warehouse

- Flexible schema management

- In-flight data formatting

Fivetran

Fivetran is a cloud-based data migration tool that automates the extraction, transformation, and loading (ETL) of data from disparate sources into a centralized data warehouse. It has ready-made connectors for well-known data sources such as Salesforce, Amazon Redshift, Google Analytics, and PostgreSQL, and it can be tailored to work with additional data sources. By eliminating manual data handling, Fivetran makes it simple to keep a data warehouse accurate and up to date.

Key Features:

- It can connect to multiple data sources quickly and easily.

- It supports several different data types.

- It can create reports and visuals automatically.

- It has the capacity to transform data.

- It is capable of loading data.

- It is capable of data synchronization.

- It is capable of protecting data.

How to Select a Data Migration Tool?

A thorough data migration strategy prevents a subpar experience that can end up causing more problems than it solves. An inadequate plan is a common reason data migration projects fail. Choosing a data migration tool is part of that strategy, and the decision should be based on the organization’s business needs. Consider the following before choosing a data migration tool:

Location

Do you want to keep your data on-premises (within the same environment)? Do you want to move data from on-premises to the cloud, or from one cloud storage provider to another? The answer will help you choose which group of tools to consider.

Business Necessities and Probable Effects

What type of timetable is required for migration? When will the lease on a data center expire if it is being decommissioned? Throughout the relocation process, what kinds of data security must you uphold? Is there a certain amount of data loss or corruption that may be tolerated? What impact will delays or unforeseen obstacles have on the company?

Data Volume

How much data must be transferred? If the data is less than ten terabytes (TB), shipping it to its new storage location on a client-supplied storage device is often the easiest and most economical method. For larger transfers, for example up to multiple petabytes (PB), a specialized data migration device provided by your cloud provider may be the most practical and cost-effective choice. Alternatively, you can move and ingest large amounts of data into a cloud data warehouse or a cloud data lake with an online data migration tool or a cloud data ingestion tool.

Data Model

Does your data model need to change? Two examples of data warehouse migrations are moving from an on-premises data store to a cloud-based one and switching from relational data to a blend of structured and unstructured data. On-premises tools are often the least adaptable, whereas cloud-based tools support the widest range of data models.

Data Quality

Are there data quality requirements for cleaning the data, applying rules to the source data, or loading the data into the target? What process is followed to improve data quality so that it complies with data governance rules?

Data Transformation

Does your data need to be transformed (enriched, cleaned up, merged, etc.)? You’ll almost certainly have to change your data during the migration because you’ll be adding or changing data sources. All data migration tools can transform data, but cloud-based tools typically offer the most flexibility and support the widest variety of data types.

Reliability

Cloud-based products clearly stand out here: thanks to their highly redundant infrastructure, they achieve close to 100 percent uptime.

Security

Any data transfer solution must meet strict security and regulatory requirements. Are you migrating any sensitive data? Compliance regulations that apply to sensitive data can be difficult to satisfy during a migration. Cloud-based tools are likely to be very secure and compliant, while on-premises solutions depend on the overall security of your infrastructure.

Sources of Data and Destinations

How much of the data must be moved? Has the system been used in unusual ways, or does it have any quirks? Will both environments run the same operating system? Will formatting or database schemas need to change? Do data quality problems need to be resolved before the migration?

Performance & Scalability

Cloud-based solutions excel in this category above the competition because of their flexibility, which enables them to scale up or down in response to user demands.

Pricing

Pricing depends on a number of variables, including the number and variety of data sources and destinations and the service level. By using a cloud-based data transfer solution, you may be able to significantly reduce your infrastructure and personnel costs, freeing up funds for other projects. For the majority of data migration projects, cloud-based solutions offer the best pricing options, and some of these products have a free tier that businesses can take advantage of.

Conclusion

We have looked at open-source, on-premises, and cloud-based data migration tools, along with several excellent applications in each category. I hope this has given you a clear understanding of how to select your preferred data migration tool. A good data integration program helps companies manage their data and reports more effectively. Choose the option that generates the most value for the company and its clients.

In summary, the right tool depends on the task at hand and on your specific database management needs, because different tools suit different scenarios. We covered the fundamentals of data migration, including its types, how it is done, why it is needed, and what to consider when choosing a tool. Join the Clarusway AWS bootcamp if you want to learn more about data migration tools. I’ll hopefully be back soon with another piece. Goodbye!